Palantir UK Boss Says It’s Up to Militaries to Decide How AI Targeting Is Used in War

As militaries integrate artificial intelligence into warfare at an accelerating pace, the technology companies building these tools are drawing a firm line on where their ethical liability ends.

Speaking on the rapid deployment of algorithmic tools in combat, the UK chief of Palantir, the prominent data analytics and defense software contractor, stated unequivocally that responsibility for AI targeting decisions rests solely with military commanders, not with the companies that build the software.

The Ethics of Algorithmic Combat

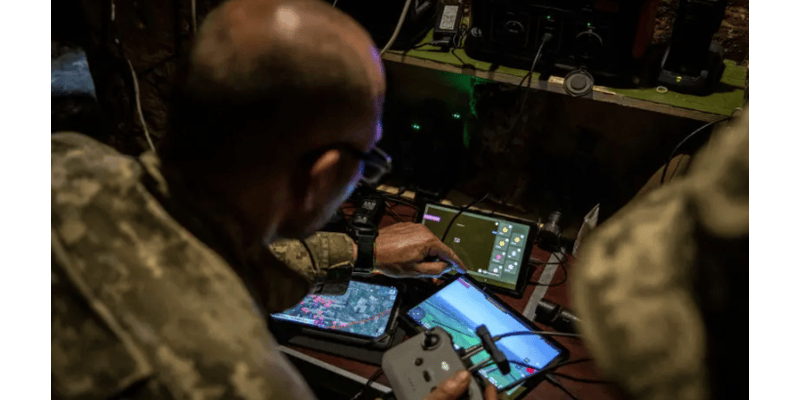

These comments arrive at a time of heightened global tension. Armed forces around the world are increasingly relying on AI-assisted drone operations and automated targeting systems in active conflict zones.

The core argument from defense contractors like Palantir is straightforward:

- The Tech Role: Software companies provide the infrastructure, the algorithms, and the raw analytical capability to process battlefield data at superhuman speeds.

- The Military Role: The rules of engagement, the final human oversight, and the moral weight of a lethal strike remain entirely with the armed forces executing the mission.

A Growing Global Debate

Critics and human rights advocates, however, argue that software developers cannot simply wash their hands of accountability. They warn that AI targeting in war is fraught with unprecedented risks.

Algorithms can suffer from inherent bias, flawed training data, or digital “hallucinations” that could lead directly to unintended civilian casualties. As the fog of war becomes increasingly digitized and fast-paced, the debate over who takes the blame when a machine makes a fatal error is intensifying. Tech giants and international tribunals alike may soon have to define the exact boundaries of algorithmic warfare.
